Summing Up: Container Image Building

by Puja Abbassi on Feb 24, 2020

As we draw a line under this series about the state of the art of container image building, it’s worth taking a moment to reflect on what we’ve discovered.

In contrast to the early days of the containerization trend made popular by Docker, there are many more players in the container image building game. We certainly haven’t looked at them all!

However, each tool exists as a result of the perceived inadequacies of the original container build experience provided by Docker. And each has a unique angle, whether it’s commercial or technical in nature.

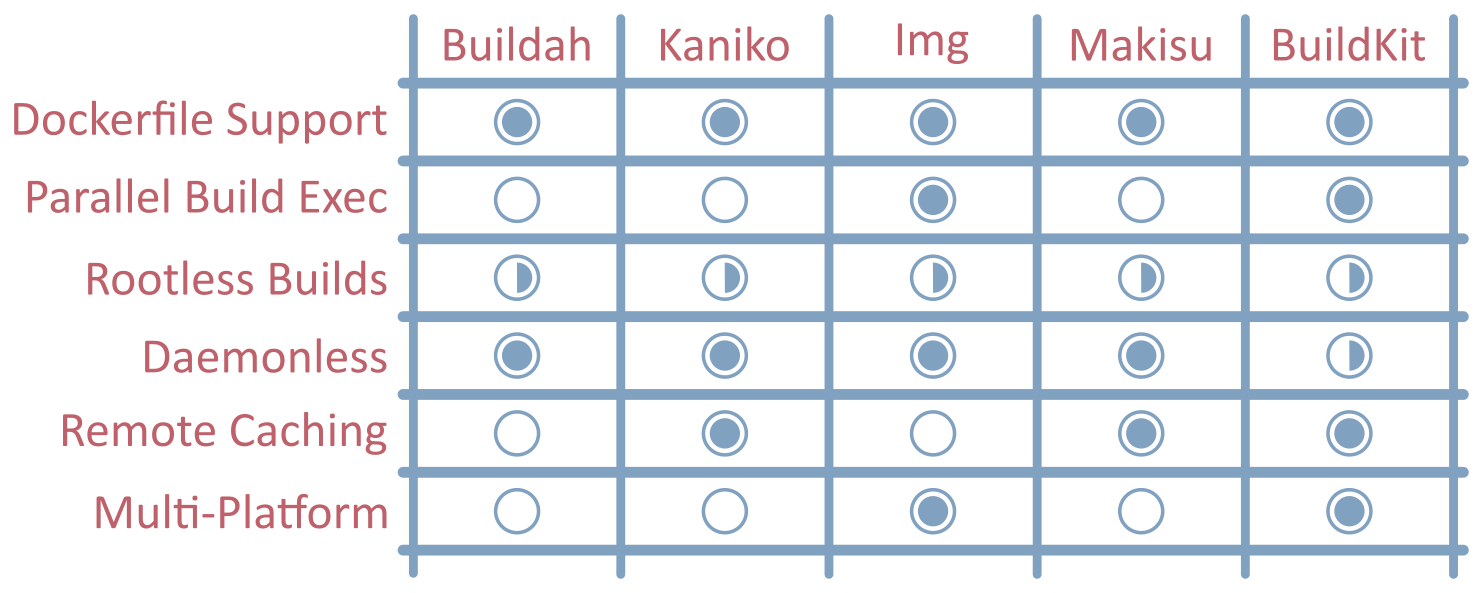

Dockerfile Support

What’s particularly interesting is that each tool still supports (if not promotes) the Dockerfile as a declarative means of describing container images.

This is a testament to the simplicity provided by the “docker build” experience, the popularity of the official Docker images, and the ubiquity of container images built this way that are out in the wild. Sure, there are other approaches that don’t use the Dockerfile, but it seems like the Dockerfile is here for some time to come.

Rootless Builds

The increasing adoption of Kubernetes as the home for container workloads has had a big bearing on the direction of travel for image building.

Mounting the Docker socket in a pod, or running privileged build containers (Docker-in-Docker, or DinD), provided a less than optimal experience, especially for those who need a strong security stance. Today, rootless and non-privileged builds are possible, but they require some sub-optimal configuration or tweaks in the hosting environment. Getting to the current state of affairs required persistence in getting changes merged into the Linux kernel, the Docker Engine, and Kubernetes. However, there is more to do to make the process truly secure without the need for unwarranted privilege.

Daemonless

Container image building in Kubernetes has also driven the advances in daemonless builds, with the dependency on the Docker daemon slowly becoming a thing of the past.

All of the tools considered in this series are daemonless, although BuildKit has to be run as an ephemeral daemon to achieve the same effect.

Reducing Build Times

Developers are constantly looking for ways to speed up container image builds as they iterate during application development.

BuildKit wins hands down here: its concurrent dependency resolution delivers impressive performance when compared with the competition. However, optimal use of the build cache is also a key factor in build durations. Remote and distributed build cache techniques are becoming increasingly important, especially when it’s unclear which host a build task will end up on. This is an area that has received a lot of attention in the majority of the new image build tools.
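To make the remote cache point a little more concrete, here is a minimal Python sketch of content-addressed cache keys. It is an illustration only, not how BuildKit or any other tool in this series actually computes its keys. The function names (`step_key`, `build_keys`, `reusable_steps`) and the digests are hypothetical. The idea is that a key derived purely from the parent key, the instruction, and the digests of any copied files is the same on every host, so it doesn’t matter where the build task lands.

```python
import hashlib


def step_key(parent_key, instruction, input_digests=()):
    """Derive a cache key for one build step (hypothetical scheme).

    The key depends only on the parent key, the instruction text, and
    the digests of any files the step consumes, never on the host.
    """
    h = hashlib.sha256()
    h.update(parent_key.encode())
    h.update(b"\0")
    h.update(instruction.encode())
    for digest in sorted(input_digests):
        h.update(b"\0")
        h.update(digest.encode())
    return h.hexdigest()


def build_keys(base_image_digest, steps):
    """Chain the keys so that each step's key covers everything before it."""
    keys = []
    parent = base_image_digest
    for instruction, inputs in steps:
        parent = step_key(parent, instruction, inputs)
        keys.append(parent)
    return keys


def reusable_steps(keys, remote_cache):
    """Count the leading steps whose keys are already in the shared cache."""
    hits = 0
    for key in keys:
        if key not in remote_cache:
            break
        hits += 1
    return hits


# Placeholder digests and instructions, purely for illustration.
steps = [
    ("RUN apt-get update", []),
    ("COPY requirements.txt .", ["sha256:1111"]),
    ("RUN pip install -r requirements.txt", []),
]
keys = build_keys("sha256:base", steps)
```

Because the keys are chained, changing the copied file invalidates that step and everything after it, while earlier steps can still be pulled from a cache that any build host can reach.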

Architectures

Finally, whilst most applications are targeted for x86_64 architectures, as the adoption of paradigms like edge computing ramps up (fuelled by IoT), image builds need to accommodate different CPU architectures. BuildKit (and by implication, Img) provides some support for cross-platform images, but the other tools have some catching up to do.
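For readers who haven’t looked inside a multi-architecture image, the sketch below shows the general shape of an OCI image index and how a client might pick the entry for its own platform. The `mediaType`, `manifests`, and `platform` fields come from the OCI image specification, but the digests and sizes are placeholders, and `select_manifest` is a hypothetical helper rather than part of any tool covered in this series.

```python
import json

# A trimmed image index with placeholder digests and sizes.
INDEX_JSON = """
{
  "schemaVersion": 2,
  "mediaType": "application/vnd.oci.image.index.v1+json",
  "manifests": [
    {
      "mediaType": "application/vnd.oci.image.manifest.v1+json",
      "digest": "sha256:aaaa",
      "size": 1234,
      "platform": {"architecture": "amd64", "os": "linux"}
    },
    {
      "mediaType": "application/vnd.oci.image.manifest.v1+json",
      "digest": "sha256:bbbb",
      "size": 1234,
      "platform": {"architecture": "arm64", "os": "linux"}
    }
  ]
}
"""


def select_manifest(index, os_name, architecture, variant=None):
    """Return the first manifest descriptor matching the platform, or None."""
    for descriptor in index.get("manifests", []):
        platform = descriptor.get("platform", {})
        if platform.get("os") != os_name:
            continue
        if platform.get("architecture") != architecture:
            continue
        if variant is not None and platform.get("variant") != variant:
            continue
        return descriptor
    return None


index = json.loads(INDEX_JSON)
arm_manifest = select_manifest(index, "linux", "arm64")
```

A cross-platform build has to produce one image manifest per target architecture plus an index like this one that ties them together, which is part of why multi-architecture support is more work than a single-platform build.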

Conclusion

Container image building has come a long way since the early build experiences provided by the Docker Engine.

Whilst progress plateaued for some time during the phenomenal growth of containerization, the cloud-native community has stepped up and started to innovate in order to fix the many deficiencies. This has resulted in multiple solutions, originating from different corners of the cloud-native landscape. At one point in time, we might have been concerned about the splintering of effort and approach. But the different solutions will suit different organizations and different use cases. And, as consumers of container images, it really doesn’t matter which tool was used to build a particular image. Provided a built image conforms to the OCI image specification, a derived container is always going to function in the way we expect.

It will be interesting to return to this topic in a couple of years to see how things have changed. But, in the meantime, enjoy building your container images, and don’t forget to give back to the community!

Tweet us @giantswarm and let us know your thoughts.
